Will AI Level the Linguistic Playing Field for Researchers?

AI tools could help break down barriers for researchers who aren’t native English speakers. But not if poorly thought-out publisher policies reinforce the status quo.

For decades, academic publishing has quietly imposed a significant burden on certain authors: before their research can be considered “fit to publish,” it must first sound as if it were written by a native English speaker. Many publishers ask authors whose drafts fall short of this standard to use costly English-editing services before or after peer review.

But with the rise of large language models (LLMs) such as ChatGPT, Google Gemini, and others, researchers who are not native English speakers may now have an alternative. Can artificial intelligence finally level the playing field? Or will publishers’ restrictions on AI use continue this tradition of inequity?

Professional English editing doesn’t come cheap. Editing services typically cost between $23 and $44 per 1,000 words, a steep fee for researchers in low-resource settings or from institutions without strong publication support. For many early-career scholars across Asia, Africa, or Latin America, this expense can equal a month’s stipend or more.

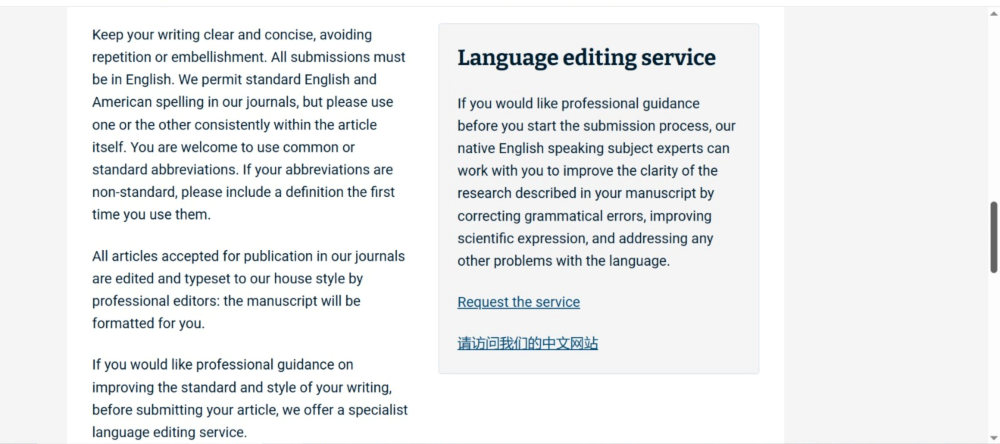

If the same language-editing services are purchased from the major publishers where researchers typically submit their papers, the cost is even higher. At Elsevier, standard English-editing services cost approximately $165 for a 1,000-word manuscript. At Springer Nature, services covering grammar, clarity, and style to improve readability and flow start at $91. Wiley does not publish its pricing openly; authors must upload a manuscript to receive a customized quotation.

In sharp contrast, comparable basic writing and language-editing support, without word limits, is available free of charge through ChatGPT. Its paid tiers have also been made more accessible: both the Go and Plus versions were initially offered free of charge, and ChatGPT Go is still priced lower in India and other countries than in the United States. Suddenly, sophisticated language support is no longer a privilege reserved for well-funded labs or Western universities. But will publishers accept manuscripts that have been run through tools like ChatGPT? You can probably guess the answer.

As AI tools become commonplace, many publishers have rushed to issue guidelines on their use. It’s fair for publishers to hold authors accountable for their use of LLMs, but in practice, policies are often prohibitive and sometimes confusing. Some publishers permit AI for grammar correction but not for rephrasing. Others ban AI-generated text entirely, regardless of how it’s used. Some, like Springer Nature, largely allow AI-assisted copyediting, while others offer vague or inconsistent guidelines.

Policies often prohibit listing AI tools as co-authors. But when LLMs are used purely as writing assistants, authorship should not be a concern, just as human editors are not considered co-authors for improving language or clarity.

For researchers already navigating language barriers, the ambiguity of these policies adds yet another obstacle. What counts as “AI assistance”? How much editing is too much? The lack of clarity leaves authors unsure and anxious. Restricting AI-based editing essentially preserves a status quo that privileges those who can afford human help.

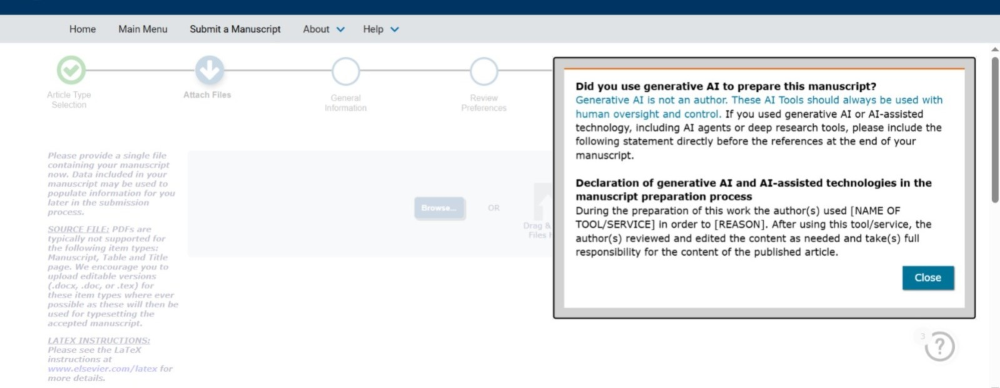

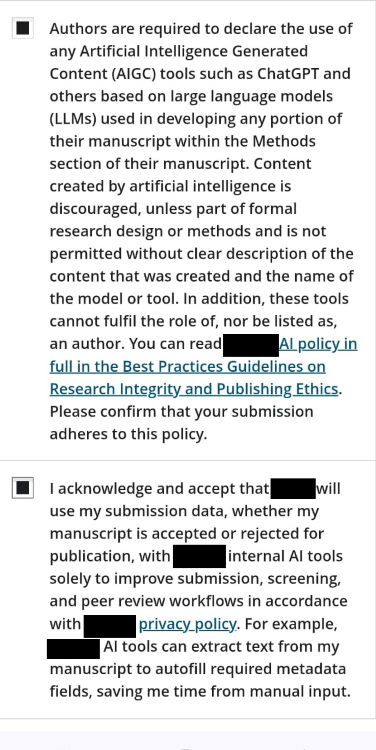

Furthermore, many publishers require authors to disclose any use of AI tools for improving language or clarity. Figures 1A and 1B show examples of declarations required during manuscript submission for two journals.

But can authors truly feel comfortable making such disclosures? While transparency is ethically sound, researchers may fear that revealing AI assistance could bias reviewers or editors against their work and undermine its perceived scientific merit. For many researchers in the Global South, who already face systemic bias and prejudice, disclosing the use of AI tools could trigger stricter editorial scrutiny and increase the risk of a desk rejection, forcing them to choose between being ethical and being safe.

For non-native English-speaking researchers, linguistic barriers are not merely stylistic—they’re structural. A manuscript might be rejected or delayed not because the science is weak, but because the English isn’t sufficiently polished.

A journal’s English language guidance appearing right beside an advertisement for a language editing service, as shown in Figure 2, signals this reality, even if the journal does not explicitly state the obvious. This kind of gatekeeping reinforces colonial legacies in academia, where Western norms of writing and expression dominate what counts as “good science.” Requiring expensive editing services under the guise of quality control creates a paywall for participation.

LLMs, on the other hand, can empower more researchers to communicate their ideas clearly and confidently—if they’re allowed to use them.

Studies comparing LLM-based proofreading with human editing have found that both can substantially improve clarity and coherence.

The takeaway? Both have value, but one is far more accessible.

The debates around AI in academic writing often unfold in terms of ethics, integrity, authorship, and misconduct. But behind these policy discussions, how are researchers navigating the daily realities of publishing in a system that is linguistically uneven and structurally biased?

To understand how AI tools are actually being used on the ground, we spoke with 12 mid-career Indian researchers across various disciplines and institutional settings, all of whom have long-term experience in scientific publishing. Their experiences echoed the concerns outlined above.

The majority now regularly use ChatGPT or Grammarly to refine grammar, improve sentence flow, restructure paragraphs, or adapt manuscripts to different journal styles. Scientific reasoning, data interpretation, and conclusions, they insisted, should remain human-driven. Several noted that AI-generated scientific interpretations often sound polished but are shallow, generic, or inaccurate. One researcher put it this way: “I mostly use these tools as language models … not as a thinker. They are particularly helpful in framing sentences, improving flow, refining grammar, and making the narrative more coherent and reader friendly.” AI is not doing the science for them; it is helping them communicate it. One researcher also highlighted that AI is now quite helpful for literature reviews and other aspects of manuscript development.

For these non-native English speakers, language has long been a major structural barrier to publication. Researchers described spending months rephrasing sentences, paying for professional editing services, or receiving repeated reviewer comments about “poor English,” even when the science was sound. Some noted that reviewers often associate authors’ geographic location with linguistic weakness, thereby reinforcing bias regardless of the quality of the content.

In this context, the researchers described AI tools not as shortcuts but as equalizers. These tools reduce time-to-submission, lower financial burdens, and allow researchers to focus on ideas rather than articulation. For early-career scholars and those in resource-limited institutions, AI-based language assistance often replaces services that were previously inaccessible or prohibitively expensive.

Yet they are unsure whether the way they use AI is permissible.

Some researchers said that, in principle, even minimal use of AI should be disclosed. But some also voiced anxiety. Would disclosing AI-assisted language editing bias editors or reviewers? Would it be interpreted as a weakness or, worse, as evidence of compromised scientific rigor, given that their work is already met with suspicion?

As one respondent said, disclosure feels safe only if it is non-punitive.

Authors want clear, function-based policies: rules that distinguish between acceptable uses (editing, clarity, grammar) and unacceptable ones (data generation, analysis, interpretation). Interviewees consistently argued that AI should never be an author, reinforcing the necessity of human accountability and responsibility for scientific claims. Many compared AI to earlier technological shifts such as calculators, statistical software, and computers: powerful tools that accelerate work but do not replace expertise.

At the same time, concerns about over-dependence, homogenized academic voice, and risks to reproducibility surfaced. AI, interviewees stressed, must remain subordinate to human judgement.

What frustrates these researchers is not regulation, but ambiguity. If publishers are serious about equity, inclusion, and global participation in science, these lived experiences cannot be ignored. Instead of focusing only on transparency, we need clearer norms about when AI use is ethically unproblematic and when it crosses into questionable territory.

In the table below, we’ve mapped a spectrum of AI use cases in academic writing—situations in which authors often find themselves uncertain about what is acceptable—and our proposed guidance for what should be disclosed, and how.

AI-Use Spectrum: When and What to Disclose

| # | Category | Description | Disclosure | Examples |
|---|---|---|---|---|
| 1 | AI as a supportive technical tool | No intellectual contribution; low risk, minimal ethical concern | Permissible | Grammar correction, spelling checks, language polishing. Improving clarity and readability without altering meaning. Formatting references, citations, and manuscripts. |
| 2 | AI contributes more substantially under human supervision | More ambiguity: AI augments human effort, and ethical concern increases | Some ambiguity | Human-written draft polished by AI for narrative and writing style. AI adjusts the tone of a paragraph written by a human. |
| 3 | AI contributes to data analysis under human supervision | AI does more complex work, all under human supervision and cross-verification | Declare use | AI used in data analysis workflows (graphs, pattern recognition) under human supervision. Supporting literature discovery or thematic clustering (not interpretation). |
| 4 | Whole paper drafted through human-AI interaction using intelligent prompts | Entering a gray zone: AI used more extensively, with human-AI exchange throughout and more automation | Gray zone | LLMs draft substantial manuscript sections with some human intellectual input and verification. AI-assisted literature reviews, cross-checked by humans. |
| 5 | Fully AI-generated, from text to data to analysis to results | Strict no-go zone: AI used without a human in the loop, mostly automation | Not permitted | LLMs draft substantial manuscript sections without human intellectual input. AI writes entire literature reviews by prompting alone, without transparent verification. |

Categories 1–2 represent lower-risk AI use. Category 3 requires disclosure. Categories 4–5 enter ethically sensitive territory where full transparency or avoidance is expected.

The key takeaway: the use of AI in academic writing spans a spectrum, from applications comparable to traditional editorial services, which should clearly be permissible, to situations in which AI is used largely without human intellectual input, which should clearly be prohibited. The space in between requires a more nuanced conversation. This includes cases in which AI more substantively augments human effort in creating a text, contributes to data analysis under human supervision, or is used more extensively and automatically, for example to draft substantial sections of a manuscript with some human intellectual input and verification.

In our interviews with researchers, some consensus emerged around several recommendations for how to make publisher guidelines on AI use clear, effective, and fair:

At its core, this debate is not about tools, but about fairness. Requiring costly human English editing services disproportionately disadvantages scholars from the Global South, non-native speakers, and underfunded institutions. By updating their AI use policies to acknowledge responsible, transparent use of LLMs for language improvement, publishers can remove a needless barrier to participation in global research.

Science thrives on diversity of ideas and perspectives. Language, and the means to refine it, should not be a gatekeeper.

10.1146/katina-040926-1

Copyright © 2025 by the author(s).

This work is licensed under a Creative Commons Attribution Noncommercial 4.0 International License, which permits use, distribution, and reproduction in any medium for noncommercial purposes, provided the original author and source are credited. See credit lines of images or other third-party material in this article for license information.